Author: Wall Street CN

A capability that could alter the trajectory of technological development is quietly taking shape inside AI labs. Once AI systems begin to develop more advanced AI autonomously, humanity's ability to understand and control technological evolution will face unprecedented challenges.

According to a workshop report released in January 2026 by the Center for Security and Emerging Technology (CSET), this process has already begun and may accelerate in the coming years, bringing "major strategic surprises."

OpenAI has publicly announced its plan to create "truly automated AI researchers" by March 2028. Reports indicate that leading AI companies are already using their most advanced models to accelerate research and development, and that these models are often deployed internally before being released to the public.

One machine learning researcher who attended the workshop said that, on carefully selected tasks, an AI model could complete in 30 minutes work that would have taken him hours. As model capabilities improve, the range of R&D tasks that can be automated keeps expanding.

The core risks of this technology are twofold: first, human oversight of the AI development process will decline; second, AI capabilities may improve faster than humans can react.

The report warns that in the most extreme scenarios, AI-driven improvements could form a self-reinforcing cycle and lead to a "capability explosion," with productivity rising from 10 times to 100 or even 1,000 times that of humans. AI systems would dominate the research and development process entirely, human involvement would approach zero, and the resulting systems could be far more capable than humans.

Some leading figures in the field of AI have warned that this could lead to an "irreversible loss of human control over autonomous AI systems," or even "massive loss of life and marginalization or extinction of humanity."

Although experts disagree widely about how likely these extreme scenarios are, the workshop reached one key consensus: such scenarios are possible, and it is worth taking preventative action now.

Because the competing viewpoints rest on different assumptions about AI research and development, new empirical data may not be enough to settle the disagreement. This means it may be difficult to detect or rule out extreme "intelligence explosion" scenarios in advance.

Leading Companies Are Already Using AI to Assist AI Research and Development

Automating AI research and development is no longer a theoretical concept. The workshop found that leading AI companies are already using their best models to help build even better ones, and AI's contribution to research and development is increasing over time. Whenever researchers obtain a new generation of more advanced models, these models are able to take on more tasks that previously required human intervention.

Engineering tasks, especially programming, are currently where AI provides the greatest value. Although the exact productivity gains from AI-assisted programming remain unclear, technical staff at frontier AI companies do spend a significant share of their time working with AI tools.

In a public document, Anthropic described how new data scientists on its infrastructure team give Claude Code the entire codebase to get up to speed quickly, and how its security engineering team uses Claude Code to analyze stack traces and documentation, resolving issues that previously took 10 to 15 minutes about three times faster.

Beyond programming assistants, AI systems assist AI research and development in a variety of other ways. For example, the "LLM as judge" paradigm has been built into many parts of AI research. This technique uses large language models to evaluate AI-generated outputs, taking over work that previously required human judgment. It is now used at scale for filtering training data, safety training, and grading solutions to problems.

An employee of a leading AI company who attended the workshop described using internal AI tools to generate about 1,000 new reinforcement learning environments for training future models, far more than he could have created on his own.

Explosion or Stagnation: Dramatically Different Expectations

The future trajectory of AI R&D automation is the central question of the report. How high will the level of automation go? How fast will it progress? How will it affect society? Workshop participants with strong opinions on these questions tended to fall into two camps: those who expect rapid progress toward a high degree of automation and advanced capabilities, and those who expect slower progress that plateaus early.

The report describes several possible developments:

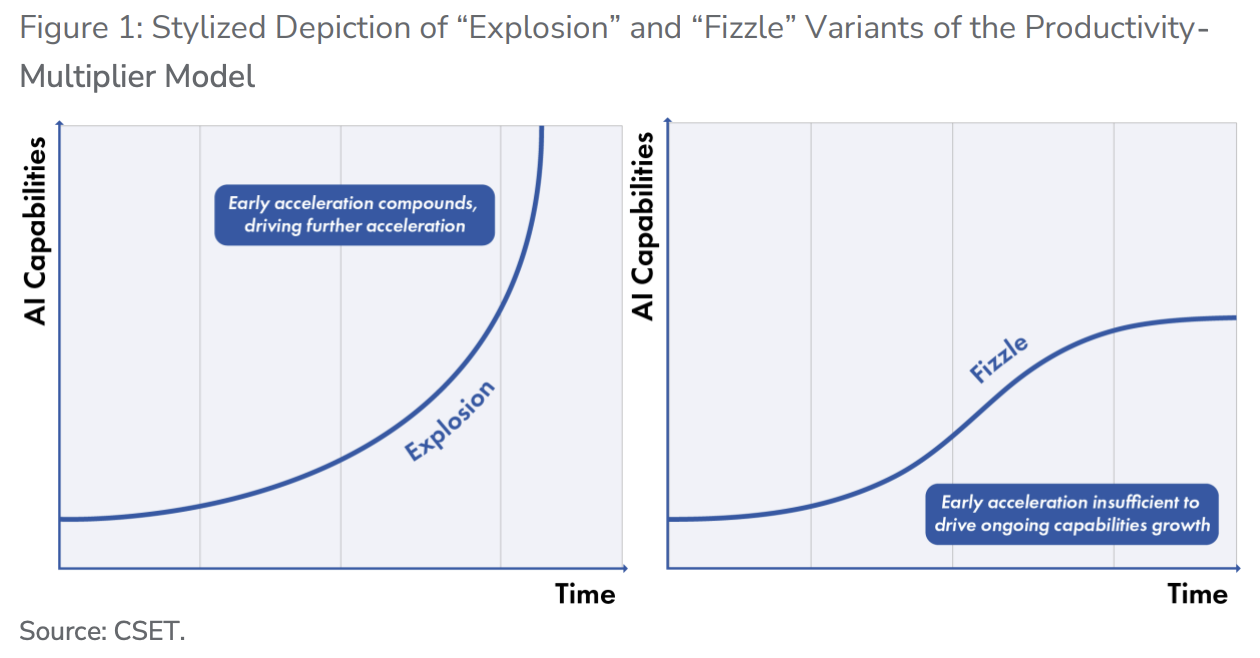

1. Productivity Multiplier Model (Explosive Version): This model assumes that the share of AI research and development automated by AI systems keeps rising, so that productivity climbs from 120% of what human-only research and development achieves to 10 times, 100 times, and then 1,000 times that level. As these improvements accumulate, progress accelerates further. Human involvement and understanding drop toward zero, and AI systems become far more capable than humans.

2. Productivity Multiplier Model (Diminishing Version): This model holds that although AI research and development becomes increasingly automated, the scientific output generated from a given level of input (such as computing power) is not enough to drive further compounding improvements in capabilities. As a result, capabilities plateau relatively early.

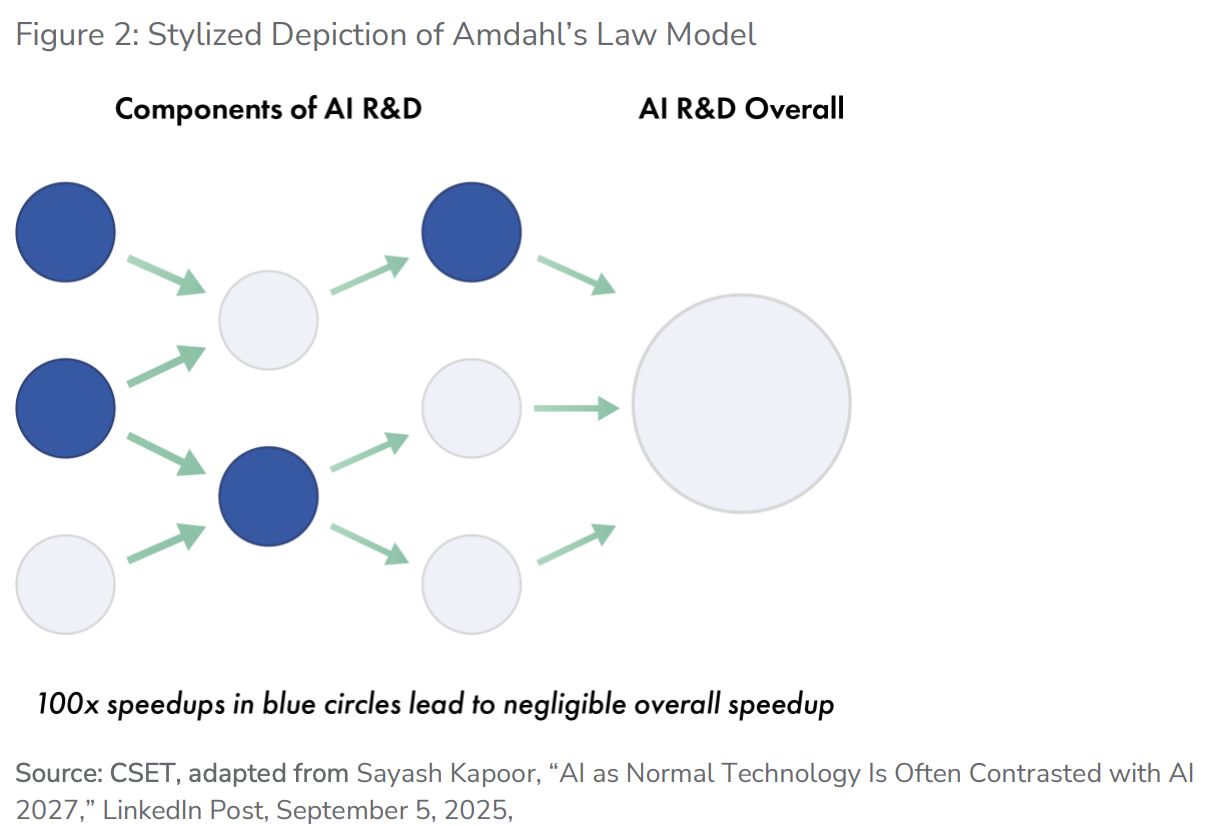

3. Amdahl's Law Model: This model holds that AI can automate only certain parts of AI R&D (for example, writing code and running experiments can be automated, but proposing entirely new research agendas or operating data centers cannot). Even if automation speeds up some parts of the R&D process, overall progress remains constrained by the activities AI cannot automate, which prevents full automation.

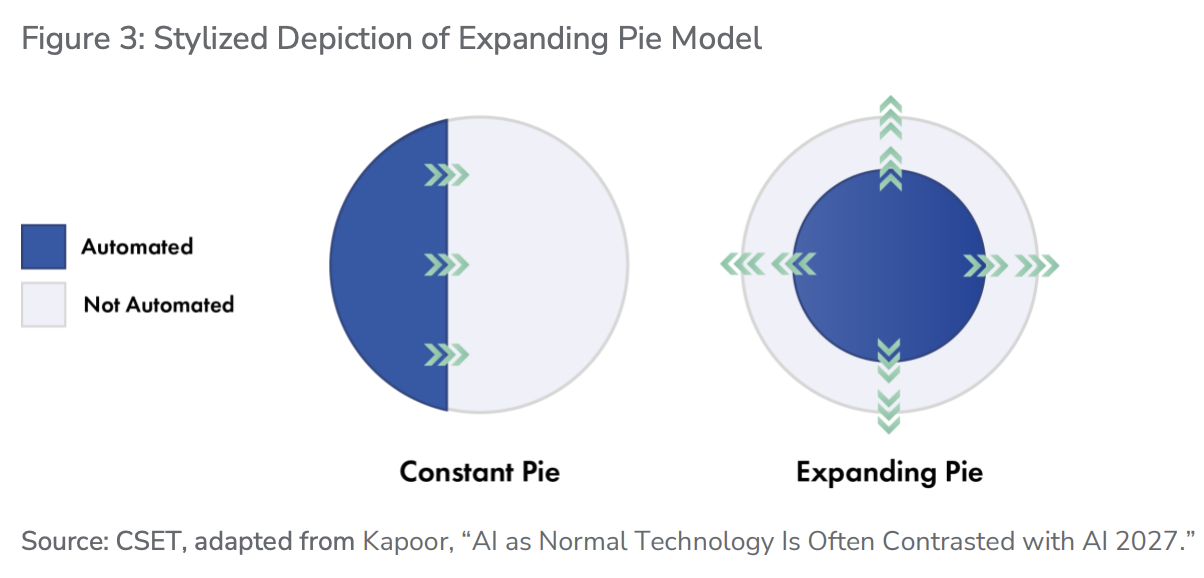

4. Expanding Pie Model: This model holds that as AI automates certain AI R&D activities, human researchers will repeatedly find that continued progress requires new kinds of contributions that AI systems cannot yet automate. AI research and development may progress very rapidly, but humans remain at the core of the process.
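The bottleneck argument in the Amdahl's Law Model can be made concrete with the standard formula from computing: if a fraction f of the work is sped up by a factor s, the overall speedup is 1 / ((1 - f) + f / s). The sketch below is illustrative only. The function names and the 80% automated share are assumptions chosen for the example, not figures from the report.

```python
def amdahl_speedup(automated_fraction: float, task_speedup: float) -> float:
    """Overall speedup when only part of the work is accelerated (Amdahl's law)."""
    if not 0.0 <= automated_fraction <= 1.0:
        raise ValueError("automated_fraction must be between 0 and 1")
    if task_speedup <= 0:
        raise ValueError("task_speedup must be positive")
    # Time for the part humans still do, plus the shrunken time for the automated part.
    remaining_time = (1.0 - automated_fraction) + automated_fraction / task_speedup
    return 1.0 / remaining_time


def speedup_ceiling(automated_fraction: float) -> float:
    """Upper bound on overall speedup as the automated part becomes infinitely fast."""
    if automated_fraction >= 1.0:
        return float("inf")
    return 1.0 / (1.0 - automated_fraction)


if __name__ == "__main__":
    share = 0.8  # hypothetical: 80% of R&D work can be automated
    for factor in (10, 100, 1000):
        print(f"{factor}x faster on automated tasks -> "
              f"{amdahl_speedup(share, factor):.2f}x overall")
    print(f"ceiling: {speedup_ceiling(share):.2f}x")
```

Under this assumed 80% share, making the automated tasks 10 times faster gives roughly a 3.6-fold overall speedup, and even a 1,000-fold gain on those tasks stays just under the 5-fold ceiling set by the work that is not automated. That is the sense in which the non-automatable activities limit overall progress.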

Differing expectations about which of these dynamics will dominate lead to drastically different answers about the "shape" of the AI progress curve: How fast will AI research and development progress? Will progress accelerate through compounding improvements or slow down through diminishing returns? How likely is it that AI capabilities will reach the level of top human AI researchers? If AI capabilities do reach that level, what are the performance limits on different tasks beyond that point? Are there bottlenecks that would hold back AI research and development?

The report's key finding is that empirical evidence is unlikely to settle in advance which of two conflicting views of AI R&D automation is correct. One view expects rapid progress leading to highly advanced AI systems (that is, "superintelligence"); the other expects slower progress that plateaus before reaching human performance in some key areas.

Both views rest on assumptions that let their holders explain why, even when contrary evidence appears, events will eventually return to the expected path. For example, one side might point to an existing bottleneck, while the other might argue that it is only a temporary obstacle and that progress will resume rapidly once it is removed.

An Urgent Need for a System of Monitoring Indicators

Despite the potential difficulty of interpreting new evidence, participants agreed that efforts to collect and understand indicators of the trajectory of AI R&D automation would be invaluable. Existing empirical evidence, including existing benchmark evaluations, is insufficient to measure, understand, and predict that trajectory.

The report recommends focusing on three types of indicators.

The first category is metrics that measure broad AI capabilities. These include the ability to carry out tasks that would take humans a long time, the ability to handle "messy tasks" (tasks with imprecise specifications that depend heavily on context and require interaction with people or other dynamic systems), and the ability to absorb new facts, skills, and ideas on the fly. Apart from the time-horizon measure tracked by METR, a nonprofit that evaluates AI models, few existing metrics capture progress in these capabilities.

The second category consists of benchmarks specific to AI research and development, arranged as a "ladder" in ascending order of complexity: software and hardware engineering (programming, debugging, performance optimization, and so on); running experiments (implementation, data collection, and analysis); creative ideation (proposing experiments and identifying key insights); and strategy and leadership (setting direction, prioritizing, and coordinating).

AI development can be fully automated only once the tasks on the upper rungs are automated, but we may not see much data on progress in these tasks until shortly before full automation. Currently, there are no benchmarks for the top two rungs.

The third category concerns signs of progress in automating AI R&D inside frontier AI companies. These include the allocation of R&D spending, R&D hiring patterns, the scale and complexity of tasks delegated to AI systems, the gap between internally deployed and publicly released frontier models, measurements of AI R&D progress, and the qualitative impressions of AI researchers.

Transparency Becomes the Core of Policy

Given the high degree of uncertainty about the trajectory of AI R&D automation, improving access to relevant empirical evidence is a valuable near-term policy goal. At present, anyone seeking empirical evidence on AI R&D automation relies heavily on voluntary disclosures from leading AI companies. These companies do release some relevant data, but it is often fragmented and incomplete.

The reasons include the following: companies often lack the motivation to invest significant resources in collecting information; even when a company does collect information, it may be sensitive (commercially or otherwise); and companies may have an incentive to share information selectively, for example to support particular narratives in order to attract investment.

A few laws and rules related to transparency in frontier AI development have recently been adopted, most notably the EU's General-Purpose AI Code of Practice and California's Transparency in Frontier Artificial Intelligence Act (SB 53). So far, however, these measures have done little to create transparency around indicators of AI R&D automation.

The policy options proposed in the report include disclosure of key indicators (voluntary or mandatory, to the government or to the public) and targeted whistleblower protections. The report also discusses other policy implications.

On risk management, the report notes that several AI companies have written automated AI R&D capabilities into their safety frameworks as a trigger for stronger safeguards, but these frameworks are still at an early stage. When developing broad regulatory frameworks, policymakers should consider whether and how to cover internal deployments rather than external deployments alone.

A high level of automation in AI research and development would also make an advantage in computing power more important for companies and countries. If AI R&D becomes highly automated, access to computing power will likely be a crucial factor in how far a given organization can accelerate its AI research. As preparation for this possibility, controls on computing power could allow the United States and its allies to slow competitors' ability to automate AI research and development at scale.

Jack Clark, former policy director at OpenAI and co-founder of Anthropic, pointed out that if AI research and development enables AI systems to evolve 100 times faster than human-built systems, "then you will eventually enter a world with time travelers who are accelerating away from everyone else." This could lead to a rapid shift of power to faster-moving systems and the organizations that control them. As long as the possibility of this acceleration cannot be ruled out, the automation of AI research and development may be the most existentially significant technological development on Earth.
