Author: Wall Street Observations

Cathie Wood, affectionately known as "Sister Wood," has released her 104-page annual report, which describes the current period as a "Great Acceleration" driven by AI. Investors who miss out on innovative assets may see their portfolios stagnate over the next decade. She also presents her latest list of "high-stakes bets."

On March 13, Cathie Wood, head of ARK Invest, and her research team presented the 104-page annual report "Big Ideas 2026" in an in-depth online video session, answering questions drawn from more than 800 submitted in advance.

In her opening remarks, Sister Wood framed the report as a return to a research paradigm: "This is similar to the kind of research that investment bankers did in the 1980s and 90s, when the PC era was just beginning to emerge, trying to glimpse the future of technology. The seeds of that revolution were sown then, and now we are in the midst of a full-blown technological revolution."

The report focuses on five major innovation platforms: artificial intelligence, multi-omics, public blockchain, robotics, and reusable rockets. The ARK team's core assessment is that these five technologies are simultaneously reaching a critical inflection point, jointly driving an investment wave that transcends traditional cycles.

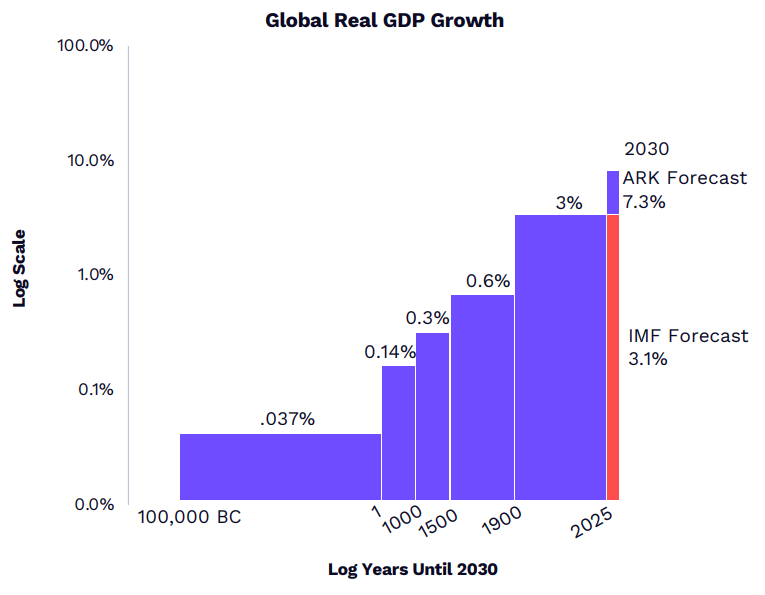

It's worth noting that ARK's chief futurist, Brett Winton, offered a striking macroeconomic forecast: driven by data center investment and the accelerated deployment of AI agents, the compound annual growth rate of global real GDP is expected to exceed 7% by the end of this decade, far above the market expectation of 3%. He argued that major technological transformations inevitably lead to structural leaps: just as 75% of US stock market capitalization was concentrated in railroads in the late 1870s, the five major innovation platforms are now at a similar inflection point in their investment cycle.

Key takeaways from Sister Wood's presentation:

Five major innovation platforms: Driven by AI, the platforms reinforce one another, accelerating the development and convergence of multi-omics, robotics, blockchain, and reusable rockets. ARK predicts that by the end of this decade, the compound annual growth rate of global real GDP will exceed 7% (versus a market expectation of 3%), and that more than 60% of global equity market value will be concentrated in disruptive innovation platforms, analogous to the railroad cycle of the 1870s.

AI is driving the third revolution in human-computer interaction, from keyboards to touchscreens to natural language, and it is being adopted twice as fast as the internet. The length of tasks AI agents can reliably complete has jumped from 5 minutes to over 55 minutes, increasing enterprises' willingness to pay. The enterprise language model software market alone is projected to reach $7 trillion, enough to support more than $1 trillion in data center investment.

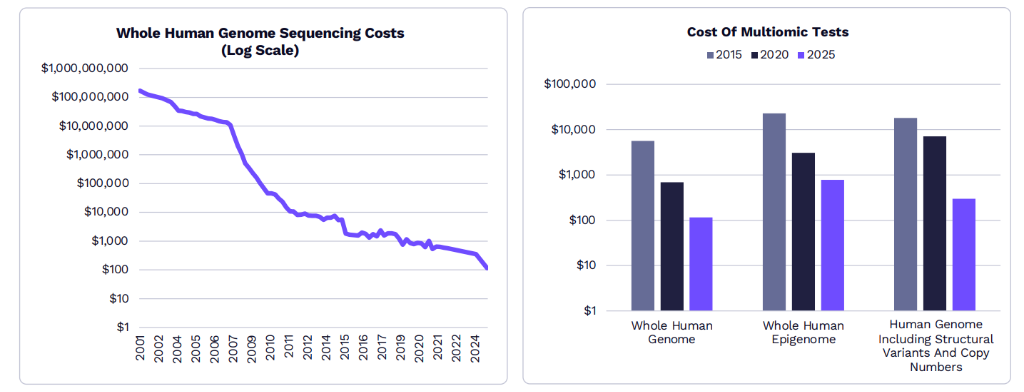

In multi-omics, the cost of sequencing a human genome has dropped from $3 billion to $100 and is expected to reach $10 by 2030, while the volume of biological data generated is already comparable to the data used to train large language models. AI can shorten time to market for new drugs by 40%, cut development costs to a quarter, and make curative therapies up to 20 times more valuable than traditional drugs. Moreover, insurers are already reimbursing these high-priced therapies.

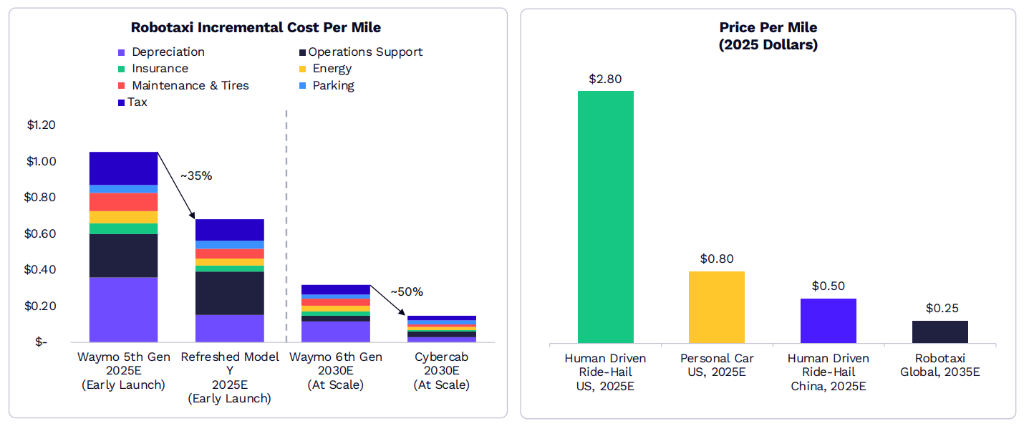

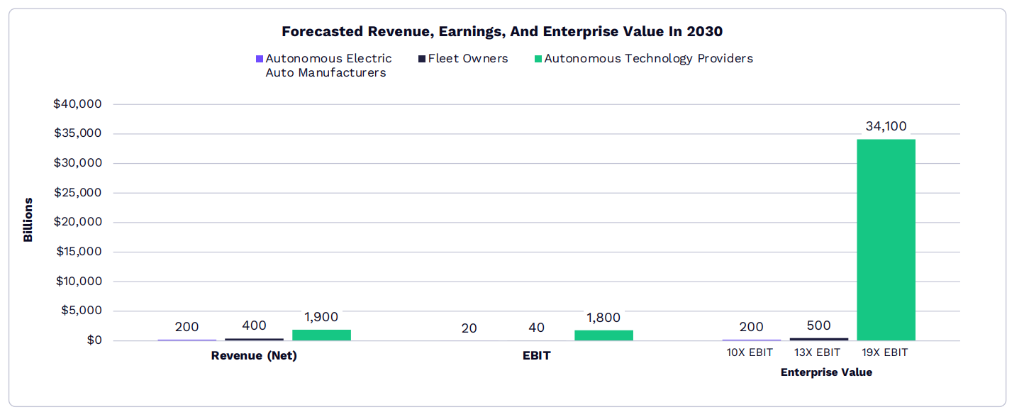

In autonomous driving, the cost per mile can fall to $0.25 at scale, far below that of human-driven ride-hailing and private cars. The technology is ready, the enterprise value opportunity is $34 trillion, and most of the value will accrue to platform operators that control the core technology. The US and China will lead deployment.

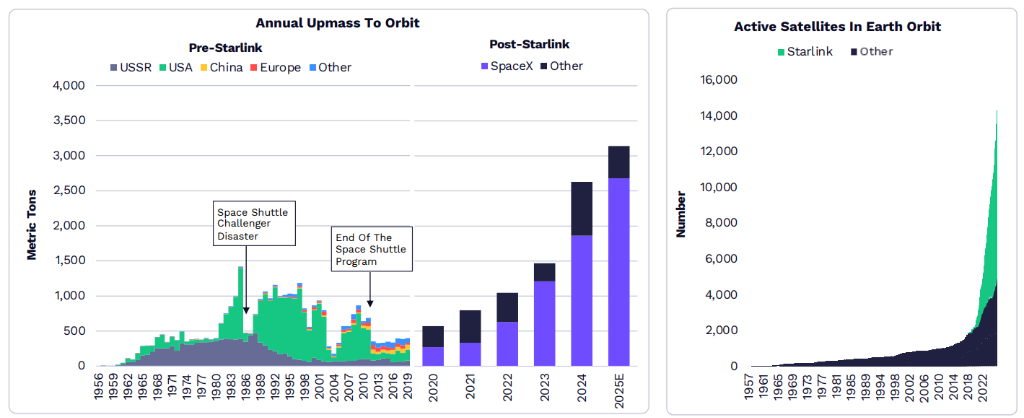

The development of AI is accelerating demand for space-based computing power. In reusable rockets, SpaceX has cut launch costs by about 95%, and once Starship is fully reusable, its launch cost is expected to drop below $100 per kilogram, giving rise to trillion-dollar markets such as orbital data centers and global satellite internet.

By the end of this decade, global real growth will exceed 7%

ARK's chief futurist, Brett Winton, gave the wildest number of the night: "We believe that by the end of this decade, the global economy will grow at a real rate of over 7%."

Winton explained that this prediction has historical precedent: "Every major technological shift triggers a structural change in the underlying equilibrium growth rate." He cited the railroad era of the 1870s, when railroads accounted for 75% of US stock market capitalization, and argued that the rise of the five major innovation platforms will bring about a similar restructuring of corporate value.
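The gap between a 7% and a 3% growth rate compounds quickly. The short Python sketch below is an illustration only: the ten-year horizon and the function name `compound_growth` are assumptions for this example, not part of ARK's model, and the code simply compounds each rate year over year.

```python
def compound_growth(rate: float, years: int) -> float:
    """Cumulative growth multiple after compounding `rate` annually for `years` years."""
    return (1.0 + rate) ** years


HORIZON_YEARS = 10  # illustrative horizon, an assumption for this example

ark_multiple = compound_growth(0.07, HORIZON_YEARS)        # ARK's >7% forecast
consensus_multiple = compound_growth(0.03, HORIZON_YEARS)  # ~3% market expectation

print(f"7% for {HORIZON_YEARS} years: {ark_multiple:.2f}x")
print(f"3% for {HORIZON_YEARS} years: {consensus_multiple:.2f}x")
```

Under these assumptions, 7% a year roughly doubles output in a decade (about 1.97x), while 3% yields about 1.34x.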

The five platforms are AI, multi-omics, public blockchain, robotics, and reusable rockets, and they are tightly coupled with one another. AI is the "central engine" of it all.

On asset allocation, ARK offered a similarly aggressive assessment: "More than 60% of global stock market capitalization will go to disruptive innovation platforms." Brett warned that core portfolios with no exposure to innovation "may actually shrink over the next decade, while the value of these innovative companies will become enormous."

AI Is Not a Bubble: Every GPU Is Being Used

In response to external criticism of over-investment in AI infrastructure, the ARK team addressed one of the most pressing questions in the market.

Frank Downing, ARK's Director of AI Research, said: "In the 1990s, during the internet era, we laid a lot of fiber optic cable, and it sat dark for many years before we were finally able to use it. Today, every GPU is in use, and there's a shortage. This is a fundamental difference."

Wood added: "Even older-generation GPUs are still in use."

Frank provided more specific data: Google Cloud (GCP) grew 48% year over year on a $70 billion revenue base. The length of tasks AI agents can reliably complete has increased from 5 minutes in early 2025 to more than 55 minutes now. Cursor (an AI programming tool) reached twice the revenue that Twilio took six years to achieve, in half the time and with half the staff.
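The jump from 5 minutes to more than 55 minutes is an 11-fold increase. A minimal sketch of the implied doubling time, assuming steady exponential growth and a hypothetical elapsed period of about 14 months (the text gives only "early 2025" and "now"); the helper names are illustrative:

```python
import math


def doublings(start: float, end: float) -> float:
    """Number of doublings needed to grow from `start` to `end`."""
    return math.log2(end / start)


def doubling_time(start: float, end: float, elapsed_months: float) -> float:
    """Implied doubling time in months, assuming steady exponential growth."""
    return elapsed_months / doublings(start, end)


START_MINUTES = 5.0    # reliable task length in early 2025 (from the text)
LATEST_MINUTES = 55.0  # latest data point cited (from the text)
ELAPSED_MONTHS = 14.0  # hypothetical: roughly early 2025 to March 2026

n = doublings(START_MINUTES, LATEST_MINUTES)
months_per_doubling = doubling_time(START_MINUTES, LATEST_MINUTES, ELAPSED_MONTHS)
print(f"{n:.2f} doublings, about {months_per_doubling:.1f} months per doubling")
```

With these inputs the sketch gives about 3.46 doublings, or roughly one doubling every four months; a different elapsed period changes the result proportionally.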

Frank then offered his core prediction: language models designed solely for enterprise knowledge workers will spur a $7 trillion AI software market. "This is just the tip of the iceberg. Fields such as multi-omics and embodied robotics will require trillions of dollars' worth of computing power."

AI + Biology: Costs Plummet as the Multi-omics Revolution Unlocks a $2.8 Trillion Market

ARK multi-omics analyst Shea calls the fusion of AI and biology "the most profound AI application scenario".

The cost of sequencing a human genome has plummeted from $3 billion and 13 years to $100, and is expected to approach $10 by 2030. As costs fall, testing volume will double, and the volume of biological data will expand tenfold by 2030. Sister Wood added, "The human body has 35 trillion cells; the data explosion brought about by single-cell sequencing will dwarf the data of the previous computing era."
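Going from $100 to $10 by 2030 implies a steep annual decline. The sketch below assumes a four-year window (2026 to 2030) and a constant percentage decline each year; both are simplifying assumptions, not ARK's cost model, and `implied_annual_decline` is a hypothetical helper.

```python
def implied_annual_decline(cost_now: float, cost_target: float, years: float) -> float:
    """Constant annual percentage decline that takes cost_now to cost_target in `years`."""
    return 1.0 - (cost_target / cost_now) ** (1.0 / years)


COST_NOW = 100.0  # USD per human genome today (from the text)
COST_2030 = 10.0  # USD per genome expected by 2030 (from the text)
YEARS = 4.0       # assumption: 2026 to 2030

rate = implied_annual_decline(COST_NOW, COST_2030, YEARS)
print(f"Implied decline: {rate:.1%} per year")
```

Under those assumptions, costs would need to fall about 44% per year.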

AI is reshaping drug development: ARK expects it to cut time to market by 40% and development costs to a quarter. The return on investment in drug development has fallen from 30% in the 1980s and 1990s to single digits, but Sister Wood asserts: "We will return to that golden age."

Addressing concerns about who will pay for high-priced gene therapies, Shea cited the CRISPR-based sickle cell disease therapy Casgevy: it costs over $2 million, yet 90% of American patients are reimbursed. The reason is that, compared with the cost of lifelong treatment, a "one-time cure" creates enormous value for the healthcare system. ARK's model suggests that each AI-driven cure could be worth more than $2 billion, 20 times the value of a traditional drug. In the United States alone, treating 7,000 patients with hereditary angioedema could save the healthcare system $52 billion.

The market potential is even more staggering: gene-editing therapies for high-risk cardiovascular disease patients have a potential market size of $2.8 trillion. "Capturing just one-twelfth of that is equivalent to Lipitor's total sales over 20 years."
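The headline figures in this section can be sanity-checked with simple division. The sketch below computes one-twelfth of the $2.8 trillion market and the implied average savings per hereditary angioedema patient; the per-patient figure is a naive average and ignores whatever timing or discounting assumptions ARK's model may use.

```python
GENE_EDITING_MARKET = 2.8e12  # potential cardiovascular gene-editing market, USD (from the text)
HAE_SAVINGS = 52e9            # system-wide savings, USD (from the text)
HAE_PATIENTS = 7_000          # US hereditary angioedema patients treated (from the text)

# "Capturing just one-twelfth" of the market
one_twelfth = GENE_EDITING_MARKET / 12

# Naive average: total savings spread evenly across patients (no discounting or timing)
savings_per_patient = HAE_SAVINGS / HAE_PATIENTS

print(f"One-twelfth of the market: ${one_twelfth / 1e9:,.1f} billion")
print(f"Average savings per patient: ${savings_per_patient / 1e6:,.2f} million")
```

One-twelfth of $2.8 trillion is about $233 billion, and $52 billion spread over 7,000 patients averages roughly $7.4 million per patient.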

Robotaxi: The $34 Trillion Market Is Rapidly Exploding

ARK Director of Investment Analysis Tasha Keeney describes robotaxis as "the first large-scale physical AI application that consumers will see."

ARK predicts that once robotaxi platforms scale up, the cost per mile could be as low as $0.25, less than one-tenth the cost of a human-driven taxi in the West and less than half the cost of driving a personal car.

The cost advantage stems from hardware iteration: Tesla's Model Y has a cost per mile that is more than 30% lower than Waymo's fifth-generation model; the upcoming Cybercab is expected to have a cost advantage of up to 50% compared to Waymo's sixth-generation model.

In terms of market size, ARK projects that by the end of this decade, robotaxis will generate a $34 trillion enterprise value opportunity, with potential annual revenue exceeding $10 trillion and annual profits of approximately $2 trillion. To date, robotaxis have logged nearly 1 million miles worldwide.

Safety data supports the technology: ARK's early calculations showed an accident rate for autonomous vehicles about 80% lower than for human drivers, a result since borne out by real-world operating data. Chief futurist Brett Winton issued a stern warning about Europe's lagging regulatory environment: "Some regulatory actions are tantamount to deliberate murder."

Winton also described a macro-level shift: the hidden labor cost of manual driving in the United States is as much as $4 trillion a year, more than 13% of US GDP. Converting this unpriced activity into tradable economic output is itself a profound economic transformation.

Reusable rockets: SpaceX has cut launch costs by 95%.

ARK autonomous technology analyst Dan McGuire pointed out that SpaceX has reduced rocket launch costs by approximately 95% since 2008. There are currently more than 9,000 active Starlink satellites, accounting for more than two-thirds of all satellites in orbit.

The key research framework is Wright's Law, a cost learning curve that ARK applies throughout its research: for every doubling of cumulative mass launched to orbit, launch cost falls by approximately 17%. Falcon 9's launch cost is currently about $1,000 per kilogram, but once Starship becomes fully reusable, ARK expects the cost to drop below $100 per kilogram.
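Wright's Law says cost falls by a fixed percentage with each doubling of cumulative volume, which gives a power law C(x) = C0 * (x / x0)^(-b) with 2^(-b) = 1 - 0.17. A minimal Python sketch, assuming the 17% rate from the text applies uniformly; the helper names are hypothetical and this is not ARK's model:

```python
import math

LEARNING_RATE = 0.17  # cost decline per doubling of cumulative mass to orbit (from the text)
# Wright's Law exponent b, defined so that 2 ** (-b) == 1 - LEARNING_RATE (b is about 0.269)
EXPONENT = -math.log2(1.0 - LEARNING_RATE)


def wrights_law_cost(initial_cost: float, cumulative_ratio: float) -> float:
    """Cost per kg after cumulative mass grows to `cumulative_ratio` times its starting level."""
    return initial_cost * cumulative_ratio ** (-EXPONENT)


def doublings_to_reach(initial_cost: float, target_cost: float) -> float:
    """Doublings of cumulative mass needed to move from initial_cost to target_cost."""
    return math.log(target_cost / initial_cost) / math.log(1.0 - LEARNING_RATE)


FALCON9_COST_PER_KG = 1_000.0  # approximate current cost (from the text)
TARGET_COST_PER_KG = 100.0     # ARK's Starship expectation (from the text)

n = doublings_to_reach(FALCON9_COST_PER_KG, TARGET_COST_PER_KG)
print(f"After one doubling: ${wrights_law_cost(FALCON9_COST_PER_KG, 2.0):,.0f}/kg")
print(f"Doublings to reach $100/kg: {n:.1f} (about {2 ** n:,.0f}x cumulative mass)")
```

Read on its own, the 17% curve needs about 12.4 doublings (a roughly 5,000-fold increase in cumulative mass) to take $1,000/kg down to $100/kg, which suggests the sub-$100 figure rests on the step change from Starship's full reusability, as the text indicates, rather than on the learning curve alone.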

"Once that threshold is reached, space-based AI computing will be cost-competitive with terrestrial computing," Brett said. "Orbital data centers will be 20 to 25% cheaper than ground-based computing." He added that mass drivers on lunar bases, the step beyond Starship, could bring launch costs as low as $10 per kilogram. "But that would require building a lot of infrastructure on the moon, which is itself an entirely new infrastructure investment opportunity."

Sister Wood's concluding remarks: AI will not eliminate jobs, but will create a whole new world.

At the end of her presentation, Sister Wood responded optimistically to anxiety about AI's impact on employment: "Many people worry that AI and automation will devour jobs, but a whole new world is opening up as a result: space is one example, and blockchain and tamper-proof digital property rights are another. We are very excited about the AI era, which will be a net job creator."

She closed from an entrepreneur's perspective: "Nowadays, everyone can write code in natural language. Go start a business; create your own company."

The following is the full transcript:

Cathie Wood (CEO and Chief Investment Officer of ARK Invest):

Hello everyone. I'm Cathie Wood, CEO and Chief Investment Officer of ARK Invest. Today, I'm here with our research and portfolio team to present "Big Ideas 2026," and we will summarize this 104-page research report for you. We believe this research is similar to what investment bankers did in the 1980s and 90s after the advent of the personal computer, trying to explore the future of technology. That planted the seeds of what we believe to be a technological revolution. Those seeds were sown in the 80s and 90s and have been growing ever since. Today, we are in the midst of a full-blown technological revolution, which calls for original research that reaches into the future and tries to understand what this revolution will bring. That is why we are here today to share this original research with you. I'm very pleased to introduce two new members of our team, OID and Shay, who are in charge of our "Multi-omics" theme, which I believe is called "Genomics" in Europe. We believe this is the deepest application of artificial intelligence. From a revenue-generating perspective, autonomous driving may be the biggest application, but we believe the genomics and multi-omics revolution will be the deepest application of artificial intelligence.

Let me first introduce OID, who will be hosting this event. OID comes from RTW, where he focused on healthcare, particularly medical devices, diagnostics, and tools. OID holds PhDs in Medical Engineering and Medical Physics from Harvard University and MIT, respectively. His background is well suited to a field where healthcare and technology continue to converge. So, welcome, OID.

OID:

Thank you, Cathie. It's a pleasure to be here with you all today. Good afternoon. I will be moderating today's session, and we are very excited to share "Big Ideas 2026" and respond to the more than 800 questions we received. It's great to have a dialogue today and respond to the ideas you've sent in. We've narrowed the 800 questions down to about a dozen, and I look forward to working through each one.

First, I'd like to introduce the team present today. Starting to my right: Brett Winton, our Chief Futurist; Frank Downing, our Director of Artificial Intelligence; Shay, whom we've already mentioned, an analyst on the Multi-omics team; Tasha Keeney, our Director of Investment Analysis; and Dan Maguire, an analyst on our Autonomous Driving team. I'd like to start with Brett. Could you talk at a high level about the convergence of technologies we're seeing, and how it is leading us toward what we call the "Great Acceleration"?

Brett Winton:

Absolutely. We believe five major innovation platforms are currently entering the market. Artificial intelligence is the core driving force, accelerating all the other platforms. Multi-omics, which Shay and OID will explore in depth. Public blockchains, including stablecoins and Bitcoin. Robotics, including humanoid and specialized robots. And reusable rockets, which Dan will discuss today. Let me think, have I covered them all? There's also energy storage, including opportunities in robotaxis and autonomous driving. Okay, I think I've mentioned them all. These five major innovation platforms are all reaching critical tipping points and are fueling an unprecedented investment cycle. If you look at how quickly we are accelerating investment in the infrastructure these technologies require, you have to go back to the railroad era to see technology investment at similar levels as a percentage of GDP. This isn't just boosting macroeconomic growth in the short term, with data centers driving marginal GDP activity and accelerated investment in AI agents transforming the business landscape. It is also laying the foundation for sustained economic growth, because we will earn positive returns on this technological infrastructure. Overall, we believe this will drive global real economic growth to a CAGR of over 7% by the end of this decade. That may sound far-fetched, but it aligns with economic history, in which major technological shifts change the underlying equilibrium growth rate. While the world generally expects growth of 3%, we believe there is strong evidence that the macroeconomy will reach a growth inflection point driven by these technologies, all accelerated by AI. Markets will follow the macroeconomy. Therefore, we believe that more than 60% of total global equity market capitalization will be allocated to disruptive innovation platforms.
This is the core message I want everyone to understand: you need to embrace innovation. Imagine an unprecedented macroeconomic cycle where growth rates shift, and your core stock portfolio is unrelated to innovation. Over the next decade or so, as the value of these innovative companies skyrockets, your portfolio's value will actually decline slightly. We believe this is entirely possible. Again, this aligns with history. Believe it or not, in the late 1870s, 75% of the U.S. stock market capitalization belonged to railroads. Well, we're in a similar investment cycle, and we believe these five major innovation platforms will generate similar enterprise value accumulation. This is what we call the "Great Acceleration." Okay, OID, ask me a question.

OID:

Okay, Brett. Our first question, and feel free to bring in any of our colleagues here if you need to, is this: Are we over-investing in AI infrastructure relative to energy availability? What does this mean?

Brett Winton:

I think people are worried about more than just energy availability. They're thinking, "My God, so much money is pouring into AI, how can this be a smart move?" The way we measure AI performance is how much value you and I, as knowledge workers, can derive from the AI software we use. Currently, we believe that if you fully utilize AI, you can achieve a 50% productivity improvement, meaning that for every unit of work you put in, you get 1.5 units of output. In principle, then, I should be willing to pay up to half my salary for that AI software. Of course, companies won't pay half; they might only pay 10%, but that's still a staggering return and a very worthwhile deal for businesses. So, in this context, as AI continues to improve, we believe that in our base case, AI software spending will reach $7 trillion, enough to support more than $1 trillion in data center infrastructure construction. Energy can be a constraint in specific scenarios: if you're building a data center in central Ohio, you have to figure out how to bring in power and build the facility. Many excellent neocloud companies are trying to do exactly this. We don't think energy is a global, overall constraint, but it's certainly a friction point that needs to be overcome.

Cathie Wood:

I'd just like to add one point. The root of this concern lies in the tech and telecom bubble of the late 1990s and its subsequent collapse. Consider the background Brett just mentioned: we laid a massive amount of fiber optic cable for the internet age in the 1990s, and that cable lay idle for years before it was finally used. Today, every GPU is in use, and demand exceeds supply. That's a very significant difference.

Brett Winton:

That's right, even older GPUs are still in use. The $7 trillion I mentioned earlier applies only to text-based language models, primarily helping enterprise employees. There are other huge areas: multi-omics, where there are massive amounts of data we can't yet process effectively, and physical robotics, including autonomous vehicles and humanoid robots, which require even more computing power. We need trillions of dollars' worth of computing power, so I think you'll see this trend develop over the next decade.

OID:

The next question, I believe, touches on one of the biggest examples of convergence: To what extent are space-based data centers feasible and economical? What technological and commercial milestones are needed for them to compete with terrestrial alternatives?

Brett Winton:

Well, Dan is the expert on this, but let me try to explain first, and you can point out any mistakes I make. (Laughter) What I mean is, it's uneconomical on existing launch platforms. SpaceX's Falcon 9 can land, but its tonnage to orbit is limited. Once they have Starship, the next generation of reusable rockets, we think the cost to orbit could drop to a few hundred dollars per kilogram. Once that threshold is crossed, space-based AI computing can become cost-competitive with ground-based computing. This is significant for reusable rockets because the required launch capacity could increase 60-fold. It's a great example of how accelerating AI can, in turn, drive demand for another technology. It also relates to the earlier question: if people in Ohio are vehemently opposed to building data centers, you have another place to put them. This should let AI computing power scale while circumventing some local restrictions that political factors might impose.

Cathie Wood:

I'd just like to add one point. Elon Musk says it's an engineering problem, and we've learned that when Elon focuses on an engineering problem, the outcome is often different. Most people thought powering a car with cell phone batteries was a bad idea that nobody would pursue. But he was right, and he succeeded. So we're incredibly excited that he's now really focused on space. (Laughter)

OID:

That's great. Thank you, Brett. Thank you, Cathie. Okay, Frank Downing, if you could walk us through our views on AI, we have a few more questions after that.

Frank Downing:

Okay. I'll go into more detail about our AI research. We see this as a generational platform shift. In the past, we moved from the personal computer era to the smartphone era. Now, we believe AI is driving yet another generational shift in how we interact with technology. The new user interface isn't a move from keyboard to touchscreen, but to natural language. We will interact with computers in a fundamentally new way, making computers and technology easier to control and more powerful. We will see a whole new range of hardware form factors enabling this; I'm wearing my Meta Ray-Ban glasses today, which have an AI assistant built in. Compared with the last platform shift, the internet era (including smartphones and cloud computing), we're seeing adoption twice as fast, or even faster. In just three years, AI reached 20% of the relevant population, while the internet took seven years. So everything is happening incredibly fast, and we can see that in the data. Part of this is due to the dramatic drop in the cost of AI, in both training models and running inference on them. That allows the technology to spread rapidly throughout the economy. We believe this creates opportunities for consumers, for businesses, and in physical robotic forms (which we'll discuss later).

Taking the consumer side as an example, we're seeing personal AI agents become the preferred entry point for accessing products, services, and information online. People increasingly trust ChatGPT or Claude instead of traditional Google searches (Gemini is also worth noting, since Google's consumer AI adoption is second only to OpenAI's).
We believe this creates new monetization opportunities, including subscriptions we're seeing now, e-commerce platforms where AI agents handle transactions for us (making shopping more convenient), and advertising revenue flowing to the new assistants we're interacting with as attention shifts to AI systems.

Let me quickly illustrate how this works in e-commerce. You can now launch app experiences within ChatGPT, and one of them is Instacart. I've long wanted grocery delivery, but manually entering each item into an app was tedious, so I preferred going to the store myself: it's easy for me, and a lifelong habit is hard to change. But with ChatGPT, which I tried a few weeks ago, you can take a picture of a recipe book, connect it to Instacart, simply say "order this for me," and it gets about 90% of it right. You only need to change a few things. As the heads of the AI labs like to say, this is the dumbest these models will ever be. So the experience will only get better, creating revenue for Instacart that didn't exist before, because this was work I used to do myself, and now I'm happy to pay for the time saved.

If I continue with opportunities in knowledge work, that is, applying AI to business rather than to personal life, you may have heard the term "agent" become incredibly popular over the past year, especially since last December, with much discussion of agentic coding and products like Claude Code significantly boosting developer productivity. We've seen since the release of ChatGPT that AI is good at writing software. But the real turning point came last November and December, when models became able to complete tasks spanning much longer time horizons, which makes them more useful and a better lever on human time. You no longer need to monitor them constantly or answer their questions every minute or every five minutes; AI agents can now reliably work for over 30 minutes. You can see this in various research reports (we've shown it on our screens): the average length of tasks agents can reliably complete increased from 5 minutes to 30 minutes over the course of 2025, and the latest data points already exceed 55 minutes. So we're seeing a huge inflection point, in fact exponential growth, as agents become more capable, increasing businesses' willingness to pay for them. We looked at the cost of a ChatGPT subscription: the basic enterprise version is $20 to $40 per month. According to the company, the time knowledge workers save each day recovers the monthly subscription fee in less than one working day. Therefore, we believe there is still significant room to monetize AI.
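The payback claim is simple arithmetic: the monthly fee divided by the value of time saved per day. The sketch below uses a hypothetical $50 hourly value for a knowledge worker and a hypothetical one hour saved per working day; neither number comes from the company's report, and `days_to_payback` is an illustrative helper.

```python
def days_to_payback(monthly_fee: float, hourly_value: float, hours_saved_per_day: float) -> float:
    """Working days of saved time needed to cover one month's subscription fee."""
    daily_value_saved = hourly_value * hours_saved_per_day
    return monthly_fee / daily_value_saved


HOURLY_VALUE = 50.0        # hypothetical value of a knowledge worker's hour, USD
HOURS_SAVED_PER_DAY = 1.0  # hypothetical time saved per working day

for fee in (20.0, 40.0):   # the $20 to $40 per month range cited in the text
    days = days_to_payback(fee, HOURLY_VALUE, HOURS_SAVED_PER_DAY)
    print(f"${fee:.0f}/month pays back in {days:.1f} working days")
```

With those inputs, the $20 and $40 tiers pay back in 0.4 and 0.8 working days respectively, consistent with the "less than a working day" claim; lower hourly values or smaller time savings lengthen the payback.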

Cathie Wood:

I just want to add one point, which answers the first question. You mentioned that inference capabilities, especially for long-duration tasks, really took off in November, and that greatly stimulated demand for GPUs. Absolutely.

OID:

That's great. I think the next two questions touch on both of these points. First, where does AI create real net new revenue, rather than just efficiency gains that squeeze profit margins? How does ARK distinguish signal from noise?

Frank Downing:

That's a good question. I think the Instacart example I gave is a good one: that's revenue that didn't exist before, new revenue being created. At the public company level, we've seen a surge in demand for computing power, for example, and we've seen it at the chip companies. All the cloud service providers, AWS, Azure, and GCP, have experienced accelerating revenue growth over the past few years. GCP has grown the fastest; for a $70 billion business, 48% year-over-year growth means a lot of new revenue. But I think this question is less about the enablers and more about the ultimate beneficiaries and where they see revenue growth. Companies like Palantir have played a significant role in demonstrating how this happens, which is why their business is growing so rapidly. In the insurance industry, for example, they work with clients like AIG, which receive thousands more insurance applications than their underwriters have time to review. They have some prioritization methods, but revenue is still being left on the table. Now, with Palantir's help, AI agents assess and underwrite applications that previously went unreviewed, generating new revenue for the business. I believe this situation exists in every sector of the economy: there are things we could do but don't have the time or resources to do today. Overall, AI not only reduces operating costs but also massively expands the market.

OID:

As AIG and other insurance companies continue to adopt AI, what will be the biggest bottleneck to AI expansion over the next three years? Electricity, computing power, data quality, regulation, or talent?

Frank Downing:

Another good question, and the market has been debating what the current bottlenecks are. The trend we're seeing is that the ultimate bottlenecks are electricity and computing power. I'm putting them together because they're both part of the same problem. Think about it: if OpenAI wants to launch a new product, or Anthropic wants to expand Claude Code to new users, they need GPUs, data centers, and grid power, or, like xAI, their own power plants. All of these need to come together. I think this is the main bottleneck, more than data or talent, because of the latest models and research trends we're seeing in AI labs: models are increasingly generating their own training data. Human seed ideas and human thinking matter, but they're then expanded with synthetic data, and the models themselves are helping find new algorithmic advances to improve their performance. As OpenAI said about its latest coding model, it's the first model for which a previous-generation model played a significant role in training the next generation. This also eases some of the talent bottleneck, although talent is obviously very important, which is why so much attention focuses on the flow of talent among the four major AI labs. So my ranking puts computing power first and foremost, provided you have the data centers and electricity to get the chips up and running.

Brett Winton:

Yes. And you can make trade-offs. Interestingly, people used to say we'd run out of data, but the emergence of chain-of-thought reasoning has made us realize that we can use additional computing power to generate more data from existing data. So if you hit a bottleneck in one area, you can trade another resource for it to improve AI's capabilities.

OID:

That's great. Thank you, Frank, and thank you, Brett. We'll move on to the next section, where we'll explore multi-omics with Shay. Shay, you really anticipated the questions in your section, so I thought we'd use your slides to discuss some of them. I'll jump straight to the first question: As AI accelerates drug discovery and diagnostics, where do you see the greatest value capture in the multi-omics stack? Is it data generation, model development, or treatment commercialization?

Shay:

Perfect. Thank you. That's a great question, and I understand the intuition of trying to pick out a layer from the stack, but in reality, we see these components reinforcing each other. AI is truly converging here, acting as a key fulcrum, driving the flywheel of biological innovation. I mean, better data, more data input into better models, better models lead to better diagnostics, better treatment interventions, better tools, which in turn generate even more, richer data. It's essentially a virtuous cycle. We organize this cycle around four key areas: first, multi-omics tools, i.e., obtaining better data at a lower cost; second, molecular diagnostics, enabling earlier and more accurate detection of diseases; third, AI-developed drugs, i.e., using these biological insights to develop better drug candidates and bring them to market faster and cheaper; and finally, curative therapies, i.e., one-time treatments targeting the root cause of disease. So they don't operate in isolation, but rather reinforce each other like a flywheel. This is a key reason why we don't see it as a single layer. What truly accelerates this flywheel is the significant decrease in cost. Looking back decades, the first human genome sequencing project, the Human Genome Project, took approximately 13 years and cost nearly $3 billion, including all the infrastructure required to complete it. Today, you can sequence the entire human genome for just $100. Looking ahead to 2030, we expect this cost to drop another order of magnitude to around $10. This cost curve has truly changed the paradigm of who gets tested, how often, and the amount of data generated. Therefore, it's conceivable that as costs decrease, testing volume will increase. This is a very significant shift; by 2030, we expect testing volume to double. 
What's particularly noteworthy is that the number of tokens, or units of biological data, already generated is comparable to the number of tokens used to train today's largest frontier language models. We expect this to grow tenfold by 2030. What I want to emphasize is that biology is becoming one of the largest data-generating engines on Earth, driving a true transformation across the entire healthcare field.

Cathie Wood:

If I may add one point: as a portfolio manager, learning these things is a privilege. On data generation, I once asked the team how many cells are in each of our bodies. The answer is 35 to 40 trillion, and we now have single-cell sequencing technology. That gives you a sense of the scale of the coming data explosion, which will dwarf anything we've seen so far in the computing age.

OID:

That's great. Shay, one point you made concerns the flywheel's impact on drug discovery and development. The question is: Can AI substantially reduce the cost, duration, and failure rate of clinical trials? And what does that mean for capital efficiency in biotechnology?

Shay:

Indeed, this hits the nail on the head. It brings us back to the idea of feeding richer data into better models. One of the clearest impacts we're likely to see is on the economics of drug development. To frame the problem: today, drug development can take over a decade and cost billions of dollars, and nine out of ten candidates that enter clinical development ultimately fail. There's clearly a need for greater efficiency. What AI makes possible is bringing drugs to market much faster, which means more revenue during the period of patent protection, while also reducing costs. There's a real compounding effect. Our own modeling suggests this could shorten time to market by 40% and cut the actual cost of developing a drug fourfold. That is a very significant shift. The question asks about capital efficiency, and that points to a clear problem: the return on drug development has fallen to single digits. That paradigm shifts, however, once you account for the compounding effect of faster time to market, lower costs, and higher success rates. Go a step further and apply this to curative therapies, and the shift becomes even more pronounced. Historically, early-stage assets have had little economic value; by contrast, each AI-driven curative drug could potentially be worth over $2 billion. So, to answer the question directly: yes, our models reflect a significant impact on the capital efficiency of biotechnology.

Cathie Wood:

Let me add another perspective. The golden age of healthcare was the 1980s and 1990s, when the return on R&D spending reached 30%; today it has fallen to the low-to-mid single digits. We believe returns will climb back toward that golden-age level, which would come as quite a surprise given the market's current expectations for healthcare.

OID:

That's great. I'm rather fond of Shay's section, so I'd like to squeeze in one more question: As these technologies scale, how should investors think about regulatory risk, insurance reimbursement risk, and the roadmap for gene therapy?

Shay:

Perfect. (Laughter) It's a multi-layered problem, so let's break it down layer by layer. On the regulatory front, we've seen a lot of change, especially in the last year. In the US, the agency that approves drugs is the FDA. It has recognized how difficult it is to get drugs to market, as I just described, so its aim is to modernize and to work with drug developers to streamline clinical development so we can really solve this problem. We've already seen some new frameworks come out of this, particularly for rare diseases and for therapies targeting the underlying biological causes of disease. That's one layer.

Another part of the problem is reimbursement and insurance barriers. I understand that a $2 million price tag for a curative drug can be shocking and prompt questions like, "How can this be reimbursed? I'd expect insurance barriers." Let's look at a real-world example: CRISPR Therapeutics' Casgevy, an approved gene-editing therapy for sickle cell disease and transfusion-dependent beta-thalassemia. It's priced slightly above $2 million, yet 90% of US patients already have reimbursement access. Why? Because you have to compare the price of this drug with the chronic treatment and hospitalizations the patient would otherwise need; its value to the healthcare system justifies the price. That is the key to understanding reimbursement. What really stands out is that the economics of curative drugs are fundamentally different from those of conventional drugs. A curative drug is a one-time treatment, so you capture all the value upfront: cash flow arrives earlier, more of the revenue falls within patent protection, and it may be possible to avoid competitive overlap. This means curative drugs can be more valuable than conventional drugs, potentially up to 20 times more valuable in our model.

To make this more concrete, let me quickly illustrate it with a case study of hereditary angioedema (HAE). HAE is a rare disease that causes painful and even life-threatening swelling attacks. Currently, patients require lifelong chronic treatment to help control these attacks, with lifetime costs potentially ranging from $10 million to $20 million. Intellia Therapeutics, for example, is developing a gene-editing therapy that has shown promising clinical data. We estimate its real-world price at around $3 million, but the value-based price could be three to four times that figure, ultimately depending on efficacy and durability data. Applied to all 7,000 HAE patients in the US today, this would save the healthcare system $52 billion. This underscores the point that even with high upfront costs, you can deliver better outcomes for patients, relieving them of the burden of lifelong symptom management, while saving the system significant sums of money.
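The $52 billion figure can be sanity-checked by spreading it across the 7,000 US patients. The sketch below does only that division plus a naive comparison with the quoted lifetime-cost range; it is not Ark's model, and ignoring discounting, uptake, and treatment durability is a deliberate simplification.

```python
# Figures quoted in the talk.
us_hae_patients = 7_000
total_savings = 52e9                 # Ark's estimated system-wide savings, USD
expected_price = 3e6                 # Ark's estimated real-world price, USD
lifetime_cost_range = (10e6, 20e6)   # lifetime cost of chronic treatment, USD

# Implied average saving per treated patient.
savings_per_patient = total_savings / us_hae_patients

# Naive per-patient saving if a one-time cure at the expected price replaced
# the full lifetime cost (ignores discounting, uptake, and treatment failures).
naive_low = lifetime_cost_range[0] - expected_price
naive_high = lifetime_cost_range[1] - expected_price

print(f"Implied saving per patient: ${savings_per_patient / 1e6:.2f}M")
print(f"Naive range: ${naive_low / 1e6:.0f}M to ${naive_high / 1e6:.0f}M")
```

The implied saving of about $7.43 million per patient sits near the low end of the naive $7 million to $17 million range, which is consistent with a conservative reading of the lifetime-cost figures.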

Finally, the scaling part of the question, because it's very important. There's a major shift underway, which I'll highlight briefly because we have a blog post on it on the Ark Invest website: gene-editing therapies are starting to move toward "in vivo" editing, that is, editing inside the body. This is helping bridge the gap between rare and common diseases, including the world's leading killer, cardiovascular disease. We show here that the value-based price might be $165,000. That's quite different from the multi-million-dollar price tags I just described for rare-disease therapies, but you still have a huge potential market opportunity. If you consider only the highest-risk patients in the US and multiply their number by the value-based price, you get a $2.8 trillion market opportunity. To put that in perspective, Lipitor was a best-selling drug for years, and capturing just one-twelfth of this market would match Lipitor's cumulative sales over 20 years. So what I want to convey in this multi-omics section is that the true convergence of AI and biology is driving tremendous opportunity and a massive transformation in healthcare. I hope everyone listening is as excited about this as we are at Ark.
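Because the $2.8 trillion figure is described as patient count times value-based price, it can be inverted to show how many high-risk patients it implies. The sketch does that and computes the one-twelfth share mentioned above; it only rearranges the quoted numbers and does not verify Lipitor's sales history.

```python
market_opportunity = 2.8e12   # USD, Ark's figure for the highest-risk US patients
value_based_price = 165_000   # USD per patient, Ark's value-based price

# Patient count implied by "patients x value-based price = market".
implied_patients = market_opportunity / value_based_price

# One-twelfth of the opportunity, which the speaker equates with
# Lipitor's cumulative 20-year sales.
one_twelfth = market_opportunity / 12

print(f"Implied high-risk US patients: {implied_patients / 1e6:.1f} million")
print(f"One-twelfth of the market: ${one_twelfth / 1e9:.0f} billion")
```

The arithmetic implies roughly 17 million high-risk US patients, and one-twelfth of the opportunity comes to about $233 billion.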

Cathie Wood:

Well, the stock market hasn't fully reflected this yet, but it will catch up. What I'd say is that the biggest surprise for me was that insurers had no problem with the $2 million price tag, something I don't think the market has even noticed.

OID:

That's great. Thank you, Cathie. Thank you, Shay. Okay, Tasha, it's your turn. Please tell us about self-driving cars.

Tasha Keeney:

Okay, self-driving cars. Frank mentioned physical AI. We believe this will be the first large-scale implementation of physical AI that consumers see, and it's already happening today: we have cars on the road with no one behind the wheel and no one in the passenger seat, picking up passengers and shuttling them around. One very important point about the existing players is the underlying cost of the vehicle. It matters especially early in commercialization: when you have a small fleet, are trying to scale it up, and are trying to convince partners to work with you, vehicle cost really matters. It also matters for cost per mile, and cost per mile is exactly what we believe will drive demand for this technology. Look at Tesla and Waymo, for example: on an incremental cost-per-mile basis, the Model Y is already more than 30% cheaper than the fifth-generation Waymo vehicle. We expect this advantage to grow with upcoming models; comparing Cybercab with the sixth-generation Waymo vehicle, we expect the cost advantage to reach 50%. Again, this is extremely important in the early stages of scaling these platforms, and also for pricing them competitively for consumers.

Regarding pricing, we believe that robotaxi platforms, once scaled, could charge as little as 25 cents per mile. That is less than one-tenth the cost of human-driven ride-hailing in Western markets, less than half the cost of driving a private car, and cheaper than ride-hailing in China, which is already very inexpensive at around 50 cents per mile. We believe this cost reduction will significantly expand the current ride-hailing market, making low-cost point-to-point travel accessible to more people and ultimately making our roads safer. And we believe there is still enormous market potential.
We believe that by the end of this decade, robotaxis could create a $34 trillion enterprise value opportunity, which we believe will accrue to what we call "autonomous driving technology providers," or platform operators: companies that develop autonomous driving technology in-house and can offer it through ride-hailing and robotaxi services. Cathie mentioned that we see this as one of the biggest revenue opportunities. The total addressable revenue for robotaxis could reach $10 trillion or more, and by the end of this decade we believe revenue and profits here could reach around $2 trillion. I won't go into detail on every participant on this slide; it's just to give you an idea of the companies around the world exploring this area. As I mentioned, you can see at the bottom that we believe the platform operators, the providers of autonomous driving technology, are best positioned to capture the largest share of the market, because they are the companies that can drive down the price per mile and thereby truly expand the market. We're also seeing electric vehicle manufacturers getting involved, partnering with autonomous driving technology providers, and ride-hailing companies positioning themselves as potential customer generators. At the same time, we're seeing ride-hailing companies transform their business models, becoming maintenance service providers for both autonomous driving technology companies and automakers.

All I want to say is that we expect this to revolutionize the entire automotive industry. We believe many of today's players built on traditional gasoline-powered platforms will go through significant consolidation. We believe the future of robotaxis is electric: electric vehicles are, again, crucial to achieving attractive cost-per-mile economics. In the US, we're seeing ride-hailing prices at around $2.80 per mile, which is quite attractive. In China, ride-hailing is much more competitive, which is pushing many players toward markets like the Middle East, where there may be greater profit potential. Robotaxis are already operating today; I'll leave this data with you. Dan tracks every increment in miles we're seeing on these platforms, and we've already seen nearly a million miles on robotaxi platforms. So the question is simply when they scale, and scaling is driven by fleet growth and, again, by lower cost per mile.

Cathie Wood:

I wasn't planning on speaking after everyone, but I just wanted to add an exclamation point. I've been to Europe many times, and I've noticed that when I talk to people about robotaxis and the remarkable research our team has done, it doesn't resonate, because European regulators haven't moved yet. But we believe they eventually will, because the safety statistics are so impressive. In the long run, it would be unethical for regulators to block this trend.

OID:

Or, to put it more bluntly, I think you should interpret certain regulatory actions as actively killing people.

Cathie Wood:

(Laughter) Very diplomatic language. But back to the "great acceleration": in the United States, the implicit labor cost of our unpaid human driving exceeds $4 trillion a year. Think about it: relative to a US economy of $30 trillion, turning $4 trillion of non-monetized activity into something you can pay others to do for you, at a price below the value of your own time, ultimately transforms the economy. You're turning an activity that was previously unmeasured into economic activity, and that's GDP.

OID:

That brings us to our first question. Tasha, which regions are most likely to see large-scale autonomous driving deployment first, and which last? How decisive is regulatory harmonization for commercial success?

Tasha Keeney:

Absolutely. Interestingly, because regulation in the US has always been set at the state level, the US was one of the first markets to allow large-scale robotaxi testing, which made it one of the first to commercialize them. China is also very focused on the autonomous driving opportunity, so we're seeing a large number of Chinese players. I believe these two markets will be the first to reach scale. But as I mentioned, the Middle East is also an attractive opportunity, especially for Chinese players who may earn higher profits overseas than at home. On regulation, as Cathie mentioned, we've already seen these platforms prove much safer than human drivers. Years ago, we estimated from accident rates that robotaxis were probably more than 80% safer than human drivers. Today, we have real-world data from Waymo and other platforms that bears this out, and Tesla regularly publishes safety statistics for its Full Self-Driving software. So we know it's already safer, and the technology is mature. Regulation will of course be important for enabling widespread adoption. But we're already seeing these vehicles on the road today, and we expect widespread adoption within the next 5 to 10 years.

OID:

We've discussed how regulation could be a bottleneck in this area. Could you briefly highlight what you see as the key bottleneck to widespread deployment? Is it regulation, safety validation, computing power, mapping, or telecommunications infrastructure?

Tasha Keeney:

Yes. Again, the most important takeaway is that the technology already exists, so the barrier is no longer technological. For robotaxis to truly scale, though (they're currently available only in certain cities, and fleets are small relative to what we expect in about five years), you need companies like Tesla that can bring vehicles to market. Waymo, of course, needs to partner with other automakers, and Chinese players both build their own vehicles and collaborate with automakers. So, to reiterate, this is where low-cost vehicle platforms really matter: for expanding robotaxi fleets and offering consumers an attractive product. Regulation is certainly important, but we already have safety evidence showing that autonomous driving technology is far better than today's human drivers. It's really a matter of companies scaling up, and as they scale, we expect the cost per mile to keep falling below today's ride-hailing prices, which is what truly expands the market.

OID:

Thank you, Tasha. Next, Dan. Last but not least, let's move from self-driving cars, which are increasingly common in our daily lives, to something we may not see as often: reusable rockets.

Dan Maguire:

Of course. (Laughter) Yes, you'll be seeing more of them. Rocket reusability is truly unlocking the space economy. 2025 was a significant year, with a record annual mass of satellites launched into orbit, largely thanks to SpaceX. SpaceX today has over 9,000 active Starlink satellites in Earth orbit, more than two-thirds of all satellites in orbit. It holds this dominance because it is about 10 years ahead of the industry. What do I mean? In 2015, SpaceX landed its first orbital-class booster. Since then, its record on partial reusability has been almost perfect, while its closest competitor didn't land its first booster until late last year. So while other companies are still working to master partial reusability, SpaceX is moving full speed toward full reusability, which translates directly into lower launch costs.

A key concept in Ark's research is Wright's Law. Applied to launch costs, Wright's Law states that for every cumulative doubling of satellite mass launched into orbit, the cost per kilogram of launch falls by 17%. SpaceX's Falcon 9 has borne this out to date: based on our research, we estimate it has cut launch costs by about 95% since 2008. That has opened up numerous opportunities in the space age, including the orbital data centers Brett mentioned earlier and zero-gravity testing, including in fusion research and in support of the medical advances in the multi-omics field we've discussed. Today, the cost is approximately $1,000 per kilogram. Based on our research, once SpaceX achieves full reusability with its Starship rocket, we believe the cost could drop below $100 per kilogram. That's when the discussion about orbital data centers gets truly exciting, and I know Brett has done a lot of research in this area: at that point, we believe orbital data centers could be 25% cheaper than ground-based computing. But there's still a long way to go to get there.
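Wright's Law, as described above, is a power law in cumulative volume: each doubling multiplies unit cost by (1 − learning rate). The helper names below are hypothetical, and the sketch uses only the 17% rate and the roughly $1,000 per kilogram starting point from the talk; it is a back-of-the-envelope illustration, not Ark's launch-cost model.

```python
import math

def wrights_law_cost(initial_cost: float, cumulative_ratio: float,
                     learning_rate: float) -> float:
    """Unit cost after cumulative volume grows by `cumulative_ratio`.

    Wright's Law: each doubling of cumulative volume cuts unit cost by
    `learning_rate` (0.17 for the 17% launch-cost figure quoted here).
    """
    doublings = math.log2(cumulative_ratio)
    return initial_cost * (1 - learning_rate) ** doublings

def doublings_needed(initial_cost: float, target_cost: float,
                     learning_rate: float) -> float:
    """Cumulative doublings required to fall from initial to target cost."""
    return math.log(target_cost / initial_cost) / math.log(1 - learning_rate)

# Illustration only: start from the ~$1,000/kg figure quoted in the talk.
print(wrights_law_cost(1_000, cumulative_ratio=2, learning_rate=0.17))
print(wrights_law_cost(1_000, cumulative_ratio=4, learning_rate=0.17))
n = doublings_needed(1_000, 100, learning_rate=0.17)
print(f"{n:.1f} doublings (about {2 ** n:,.0f}x more cumulative mass)")
```

At a 17% learning rate, one doubling takes $1,000 to $830 per kilogram and two take it to about $689, while getting all the way to $100 would take roughly 12.4 doublings, on the order of 5,000 times more cumulative launched mass. That is one way to see why a step change such as full reusability matters. The same function accepts other rates, such as the 44% per-doubling decline cited later for satellite bandwidth costs.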
We're excited to follow Starship's progress this year. That's a quick overview of reusable rockets. I know we could talk about it all day, but we've covered a lot of ground today, and I'm sure you have questions I'd love to answer.

OID:

Great, Dan. Thanks. First question: Has reusable launch permanently reset the cost curve for access to space, or do costs need to fall by another order of magnitude to unlock the next wave of orbital infrastructure?

Dan Maguire:

Of course. We have a bad habit here of answering questions before they're even asked (laughter), but yes, and just to reiterate, the key is full reusability. Today, the Falcon 9 has two stages: the upper stage is lost after launch, and the lower stage is recovered. Starship's goal is two stages, both recovered after launch. That is what will reduce costs by an order of magnitude; we believe it could reach below $100 per kilogram. Again, with the emphasis on orbital data centers, this really opens up a lot of opportunities by making them economically viable, which wasn't the case before. So launch cost is truly crucial for all orbital infrastructure.

Brett Winton:

Yes, just to reiterate: the shift from around $700 per kilogram on Falcon 9 to around $100 on Starship is what enables space-based AI computing. And space-based computing requires at least 10 times, possibly more, as many satellites as today's communication constellations like Starlink, so that alone expands the market by about an order of magnitude. Then, if we colonize the Moon or establish a lunar base and can use mass drivers, we could potentially service Earth-orbit satellite constellations at around $10 per kilogram, reducing the cost of getting things into Earth orbit by another order of magnitude. But that would require building a massive amount of infrastructure on the Moon, so it would be a completely different infrastructure investment opportunity.

Cathie Wood:

I like to point out that many people worry AI and automation will destroy jobs. But whole new worlds are opening up. Space is one; another is related to blockchain technology and immutable digital property rights, and I think we're going to see an explosion there. So we're very excited about the AI era, and we believe it will create a net increase in jobs.

OID:

On net job creation and long-term change: where does long-term value accrue in the reusable rocket ecosystem? Is it in launch providers, satellites, satellite networks, or downstream data and services?

Dan Maguire:

Of course. I've talked about launch providers and launch costs, but we believe the immediate cash-generating opportunity is satellite connectivity. I think many people have heard of Starlink; it just surpassed 10 million active users. This explosive growth brings us back to Wright's Law, which is central to our research: we believe that for every cumulative doubling of gigabits per second of capacity in orbit, satellite costs decline by approximately 44%. That is a very steep cost-decline curve, and it is what's driving this explosive growth. At scale, we believe this could be a $160 billion annual revenue opportunity. That's why so many space companies are entering the public markets, trying to capture a share of it.

OID:

That's great. Thank you, Dan. We've finished all the sections. I have a few general questions here for a quick Q&A round; I'll put them to the panel, and whoever wants to can take them, and more than one person can answer. The first one: How will Ark's convergence thesis play out across AI, robotics, energy systems, and public blockchains from 2026 to 2030? And what are the most significant bottlenecks: electricity, computing power, talent, regulation, or capital efficiency? We've already had several questions about bottlenecks today.

Brett Winton:

Well, I think we've covered how it unfolds across the technology platforms. What I would add is that, from a diversification perspective, Ark's exposure to multiple technologies matters. You might invest heavily in AI enterprise software and hit setbacks, but those setbacks shouldn't be correlated with whether the market successfully prices curative therapies in multi-omics. So while AI accelerates all of these technologies, each has different commercialization friction points and different market opportunities. We're tracking momentum across all of the platforms and trying to forecast the dynamics that translate into cash flow, which is then reinvested. As for bottlenecks, I do think the world needs more computing power. Whether through space-based data centers (an orthogonal way to add computing power) or the continued expansion of wafer fabs in the US and elsewhere outside Taiwan, we have chips to keep building out computing power, and many companies are making a lot of money from it. For example, there's a supersonic commercial aircraft company, Boom, that will actually benefit, because its engines are well suited to powering AI data centers; suddenly Boom has a large business powering computing. Capital is pouring in to fund this opportunity, and we think that is the most important catalyst of all. Unit growth is crucial, as Dan mentioned several times in connection with Wright's Law.

OID:

So, on what might be a bottleneck or might hinder development: of course, a catastrophe like a global war would be a hindrance. But what's interesting is that even in difficult times, businesses and consumers are willing to change the way they do things. They look for better, cheaper, faster, more productive, more efficient, and more innovative solutions. Ironically, COVID-19 is a case in point: supply chains were disrupted for a time, but we've emerged from that crisis and moved forward.

Cathie Wood:

Yes, what I'm trying to say is that the biggest competitor to disruptive technology is inertia and the status quo. That's the biggest competition. The world is a bit turbulent right now, everyone is aware of AI and worried about what it means, and that's prompting people to act and embrace the technology. That is evidence we need to invest hundreds of billions more in computing power, because these companies lack the computing power to serve their customers.

OID:

Brett, you mentioned concerns, and we've certainly heard some of those over the past few weeks. So what do we think about the future of enterprise software and SaaS in a world of agentic AI? Which business models will be disrupted, and which will be strengthened?

Brett Winton:

Interestingly, you mention enterprise software, which many investors seem to be avoiding right now. Our view is that AI is transformative for software, but it won't necessarily destroy the entire existing landscape, because AI makes creating new software easier than ever before. Some companies will choose to build their own software or use internal capabilities to enhance their existing software. But I think it's more likely that we'll see a new wave of competitors challenging many of today's incumbents. Rather than everyone building their own CRM, we'll see many new competitors, likely more AI-native, more agile, and more industry-specific, and they will change expectations for incumbents' future revenue growth and pricing power, which is why the market is avoiding them. We haven't seen many AI-native companies go public yet, but many are sprouting in the private market, spanning many industries and functions: Sierra in customer service, Harvey in legal, Cursor in software development. Cursor, for example, just surpassed a $2 billion annualized revenue run rate, reaching that level in just three years. In the cloud computing era, a $100 million annualized run rate was a significant milestone; companies like Twilio, as I recall, took six years and 500 people to get there. Cursor achieved 20 times that revenue in less time, with fewer people. So it's possible. Some of these new software companies will be very successful, just perhaps not the same ones that are publicly traded today.

Cathie Wood:

I think it's very important that, despite what Frank just said, we believe a startup explosion is about to happen, because we can all program in natural language now. So go for it!

OID:

With the theme of a startup explosion in mind, let's wrap up for today. Thank you all so much for joining us. We hope you enjoyed learning more about "Big Ideas 2026." If you haven't downloaded it yet, please do, and connect with any of us through the website or social media. We hope you had a great time, and we wish you a wonderful rest of your day. Let's embrace innovation and move forward.
